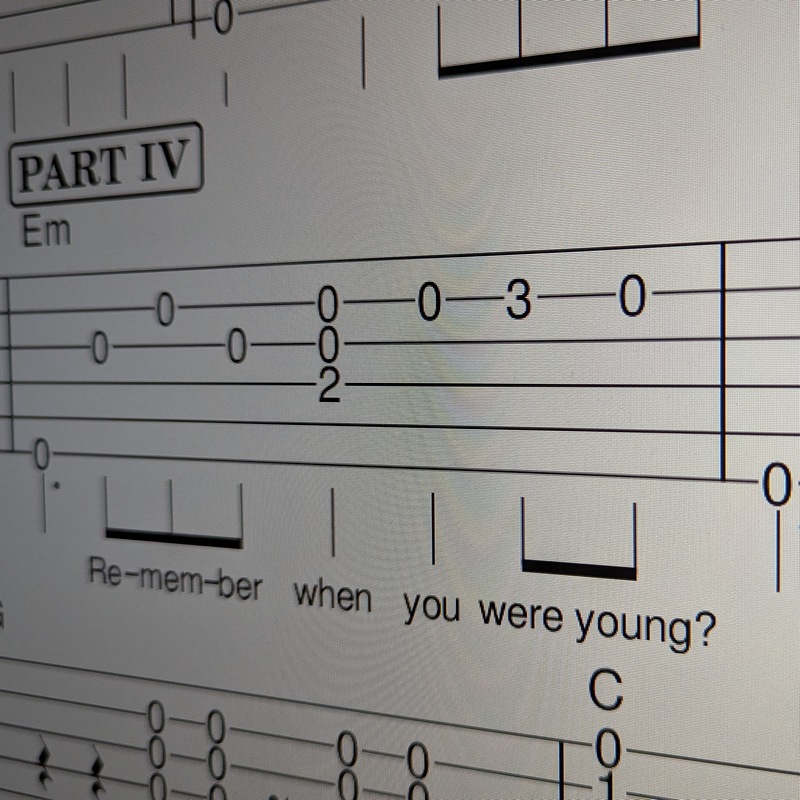

...20 years just clicked over last week - 28th May 2006 was the date of my first video upload. I didn't think I was an early adopter, since YouTube had been around for a year, but in hindsight I was. I was ten years earlier than Rick Beato, who just celebrated his ten years (I just watched his video). We had the same reason for using YouTube - it was an easy way to share videos with somebody. In my case it was for a fingerstyle forum (doesn't exist anymore). In 2006 digital videos were definitely a thing, but not as easy as they became once the tide of smartphones rose. I had bought a Sony point-and-shoot camera capable of grainy video when my first daughter was born in late 2003; in between hundreds of baby videos there are some of me playing guitar going right back to 2004, so I was no stranger to it.

I never quite had that "viral video" moment like Rick did, but I did have a lot of traffic - when I put out Canon in D it was getting thousands of views a day, and the peak was 2010, when across my channel I was sitting at 7,000 daily views - enough to push Canon in D over 3 million views in those few years. Unlike Rick, however, I didn't have much to offer - I was just an amateur fingerstyle hack (my original videos show the classic early stages of being a guitarist - it's just about moving your fingers fast into the next position and pluckin' them notes...it's missing musicality.) I never built anything around my YouTube channel; I just arranged songs and played them, and slowly got better while an ever-decreasing number of people watched. Not a problem - it was never about being a star, it was about sharing a hobby I was passionate about.

The stats - the early days show the rise - more and more people discovering YouTube. Then the fall - more and more people posting on YouTube. The growth in people posting, especially talented, musical fingerstyle players, far outpaced the growth in people watching, hence the great rise and fall of my channel. In the later years it's the algorithm that decides who is going to see what - you can see a few blips along the way as events occurred. The one I remember the most was when Ennio Morricone died and a heap of people watched my Ennio video (I actually have two).

Black line = total views, Blue line = Canon in D, Green line = Here Comes The Sun.

Going forward? There is no "what happens now" - I've always been doing the same thing! I get inspired to arrange a song, or by something musical I'm interested in, and I record a video. Even back in 2008 I was talking about playing for audiences and how recording a video for YouTube is the next best thing: it forces you to finish a project - don't be a half-song playing guitarist. No more to say. I talked about YouTube statistics back in 2013 and not much has changed. I talked about how creators are at the whim of The Algorithm and not much has changed with that either.

So business as usual. I do have a backlog of songs to record, but I'm not as single-mindedly focused on just recording arrangements as I used to be...but that's okay. Just get out there and create - don't leave it to The AIs to do!